Captain Matthew Henry Phineas Riall Sankey sighed and shaded his tired eyes from the bright glare of the oil lamp. Its light reflected harshly from the jumbled mounds of papers that entirely covered the dark oak surface of his desk. He took a moment to roll down the wick and dim the light. The chaos of his work area hinted at the chaos currently roiling in his usually precise and meticulous engineer’s mind.

He leaned backward in his chair, his shoulders slumped in despair. This problem had defeated many other men before him, he reflected as he stroked his luxuriant moustache: there would be no shame in admitting defeat.

After all, he was only one man and he was attempting to face down the single most serious and most pressing scientific and engineering issue known to the world in the Victorian Era. And yet — he couldn’t help but feel that he was, somehow, close to solving it. The answer seemed to hover mirage-like in front of him, almost within his grasp but blurred and indistinct. It became as insubstantial as mist each time he reached for it. He needed a fresh perspective, a new way of looking simultaneously both at the whole and at the parts of the question. It was not so much a question of not seeing the wood for the trees, but rather seeing the wood, trees, twigs and leaves in sufficient detail at the same time.

And what was this problem that was occupying the finest scientific and technical minds at the close of the nineteenth century? It was simply this:

Make choo choo go faster

You think I jest. But no: in 1898 the world ran on the power of steam. Steam engines were the shining metal giants that laboured tirelessly where hundreds of millions of men and beasts had toiled in misery before. In less enlightened times, industry had rested on the backs of living things that strained and suffered under their load; now, however, it was built on the backs of machines that felt no pain and could work day and night when fed with coal.

So much progress had been made over the years from the clanking primitive behemoths pioneered by Thomas Newcomen and James Watt. Those wasteful old engines had always teetered far too close to the edge of scalding catastrophe for comfort and demanded the tribute of a mountain of coal for a miserly hillock of work.

Modern steam engines were sleeker, safer and more efficient. But they still demanded too much coal for a given amount of work: somewhere, deep within their intricate web of moving parts, energy was wastefully haemorrhaging. No matter how much coal you loaded into the firebox or how hotly it burned, the dreadful law of diminishing returns worked its malevolent magic: the engine would accelerate to a certain speed, but no faster, no matter what you did. You always got less work out than you put in.

Captain Henry Phineas Sankey was searching for a tourniquet that would stem the malign loss of energy in the innards of these vital machines. He could not help but think of the wise words written by Jonathan Swift many long years ago:

Whoever could make two ears of corn, or two blades of grass, to grow upon a spot of ground where only one grew before, would deserve better of mankind, and do more essential service to his country, than the whole race of politicians put together.

What Captain Henry Phineas Sankey hoped to do was nothing less than reverse engineer the venerable Jonathan Swift: whereas previously a steam engine would burn two tons of coal to perform a task, he wanted to build an engine that would do the same work by burning only one ton of coal. That, he hoped, would be an enduring memorial to his service both to his country and to mankind.

But how to achieve this? How could one man hold in his head the myriad moving, spinning parts of a modern steam engine and ascertain how much loss there was here rather than there, and whether it was better to try and eliminate the loss here which might increase the weight of that particular part and hence lead to an unavoidably greater loss over there . . .

Captain Sankey’s restless eyes alighted on a framed drawing on the wall. It had been painstakingly drawn some years ago by his son, Crofton, and then delicately painted in watercolours by his daughter, Celia, when they were both still very young children. They had both been fascinated by the story of Napoleon’s ill-fated Russian Campaign of 1812. The drawing showed Charles Minard’s famous map of 1869.

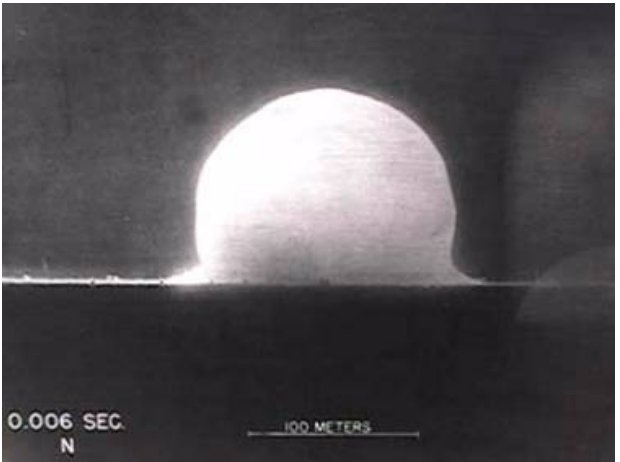

It showed the initial progress of Napoleon’s huge army as a wide thick band as they proudly marched towards Moscow and its gradual whittling down by the vicissitudes of battle and disease; it also showed the army’s agonised retreat, harried by a resurgent Russian military, and fighting a constant losing battle against the merciless ‘General Winter’. Only a few — a paltry, unhappy few — Frenchmen had made it home, represented by the sad emaciated black line at journey’s end.

Mrs Eliza Sankey had questioned allowing their children to spend so much time studying such a ‘horrible history’ but Captain Sankey had encouraged them. Children should not only know the beauties of the world but also its cruelties, and everyone should attend to the lesson that ‘Pride goeth before a fall’.

The map showed all of that. It was not just a snapshot, but a dynamic model of the state of Napoleon’s army during the whole of the campaign: from the heady joys of its swift, initial victories to its inevitable destruction by cruel attrition. It was a technical document of genius, comparable to a great work of art, for it showed not only the wood but the trees and even the twigs all at one time . . .

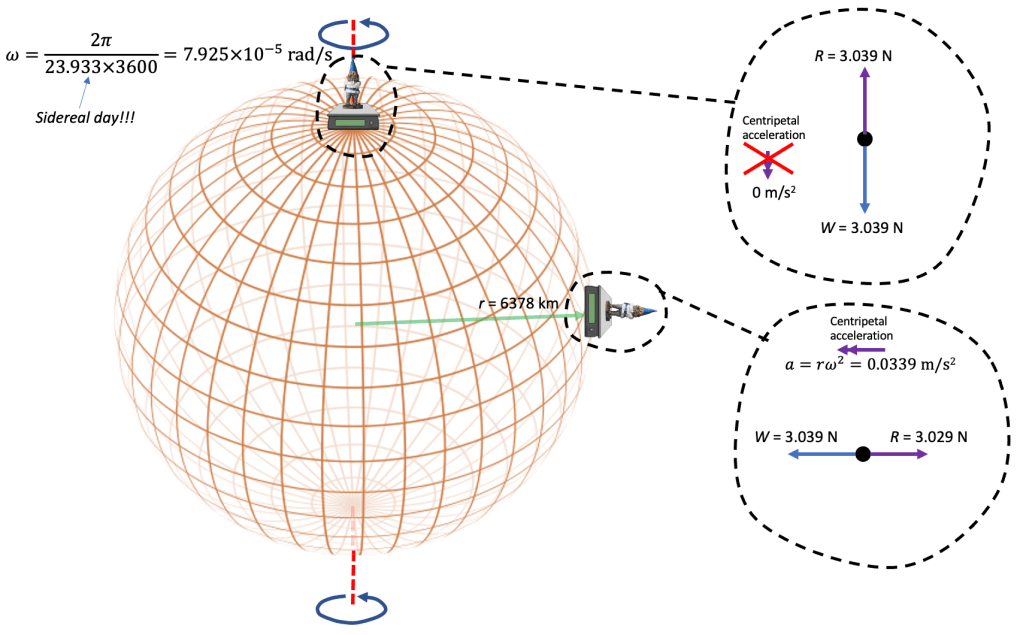

Captain Sankey started suddenly. He had an idea. Unwilling to spare even an instant in case this will-o’-the-wisp of an idea disappeared, he immediately clipped a blank sheet of paper to his drawing board. He slid the T-square into place and began to draw rapidly. This is the work that Captain Sankey wrought:

Later that evening, he wrote:

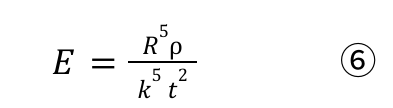

No portion of a steam plant is perfect, and each is the seat of losses more or less serious. If therefore it is desired to improve the steam plant as a whole, it is first of all necessary to ascertain separately the nature of the losses due to its various portions; and in this connection the diagrams in Plate 5 have been prepared, which it is hoped may assist to a clearer understanding of the nature and extent of the various losses.

The boiler; the engine; the condenser and air-pump; the feedpump and the economiser, are indicated by rectangles upon the diagram. The flow of heat is shown as a stream, the width of which gives the amount of heat entering and leaving each part of the plant per unit of time; the losses are shown by the many waste branches of the stream. Special attention is called to the one (unfortunately small) branch which represents the work done upon the pistons of the engine.

Captain Sankey (1898)

The ubiquitous Sankey diagram had been born . . .
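To make Sankey's description concrete, here is a minimal Python sketch of the bookkeeping behind such a diagram: start with the heat entering the plant, subtract each waste branch in turn, and whatever is left is the 'unfortunately small' branch of useful work. The function name `trace_stream` and every number below are invented for illustration; they are not taken from Sankey's Plate 5. The sketch also ignores the heat that the feedpump and economiser return to the boiler.

```python
def trace_stream(heat_in, losses):
    """Follow the heat stream through the plant, one waste branch at a time.

    heat_in: heat entering the plant per unit time (arbitrary units).
    losses:  list of (stage_name, heat_lost) pairs, in flow order.
    Returns (rows, remaining), where each row is
    (stage_name, heat_lost, width_of_stream_after_stage).
    """
    remaining = heat_in
    rows = []
    for stage, loss in losses:
        if loss < 0 or loss > remaining:
            raise ValueError(f"impossible loss at {stage}: {loss}")
        remaining -= loss  # the main stream narrows by the width of the waste branch
        rows.append((stage, loss, remaining))
    return rows, remaining


# Hypothetical figures, chosen only to make the arithmetic easy to follow.
heat_in = 1000
losses = [
    ("boiler", 250),
    ("engine", 100),
    ("condenser and air-pump", 550),
]

rows, work = trace_stream(heat_in, losses)
efficiency = work / heat_in

for stage, loss, width in rows:
    print(f"{stage}: waste branch {loss}, stream now {width}")
print(f"work on pistons: {work} (efficiency {efficiency:.0%})")
```

Laid out this way, it is easy to see which waste branch is widest and therefore where an engineer might look first for savings.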

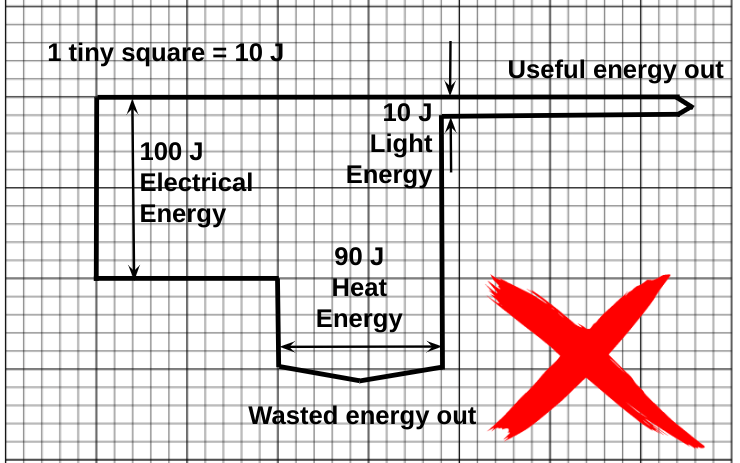

How NOT to draw a Sankey diagram for a filament lamp

Although this diagram draws attention to the ‘unfortunately small’ useful output of a filament lamp, and is still presented in many textbooks and online resources, it is not consistent with the IoP’s Energy Stores and Pathways model, since it shows the now-defunct ‘electrical energy’ and ‘light energy’.

Note that I use the ‘block’ approach which is far easier to draw on graph paper as opposed to the smooth, aesthetically pleasing curves on the original Sankey diagram.
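For the block approach, the only calculation needed is a scale: decide how many joules one graph-paper square represents, then divide each energy value by it to get the width of its arrow in squares. The sketch below assumes a hypothetical lamp that transfers 100 J in total, of which 10 J is useful and 90 J is wasted, and a scale of 10 J per square. The function name `block_widths`, the labels and the numbers are illustrative assumptions, not measurements.

```python
def block_widths(total_in, outputs, joules_per_square):
    """Return the width, in graph-paper squares, of each arrow in a block Sankey diagram.

    total_in: energy transferred into the device (J).
    outputs:  dict mapping a label to the energy transferred along that branch (J).
    joules_per_square: the chosen scale.
    """
    # The output branches must account for all of the input.
    if sum(outputs.values()) != total_in:
        raise ValueError("output branches must add up to the input")
    widths = {"input": total_in / joules_per_square}
    for label, joules in outputs.items():
        widths[label] = joules / joules_per_square
    return widths


# Hypothetical filament lamp: 100 J in, 10 J useful, 90 J wasted, 1 square = 10 J.
widths = block_widths(100, {"useful": 10, "wasted": 90}, joules_per_square=10)
print(widths)
```

With these numbers the input arrow is 10 squares wide, splitting into a 1-square useful branch and a 9-square wasted branch. Choosing a scale that gives whole numbers of squares makes the diagram much easier for students to draw.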

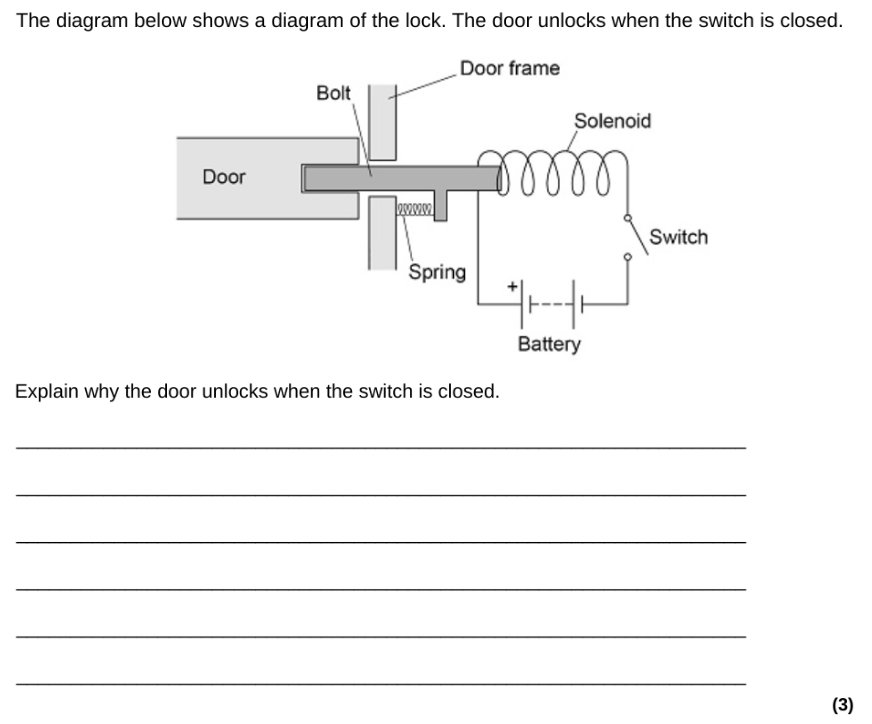

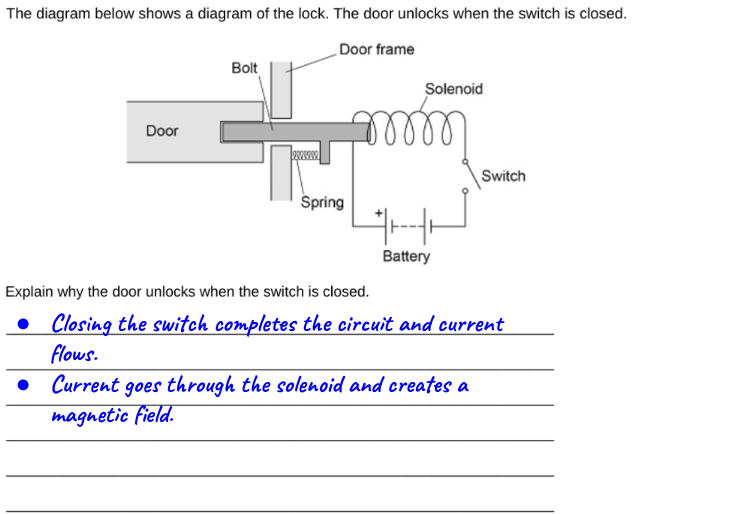

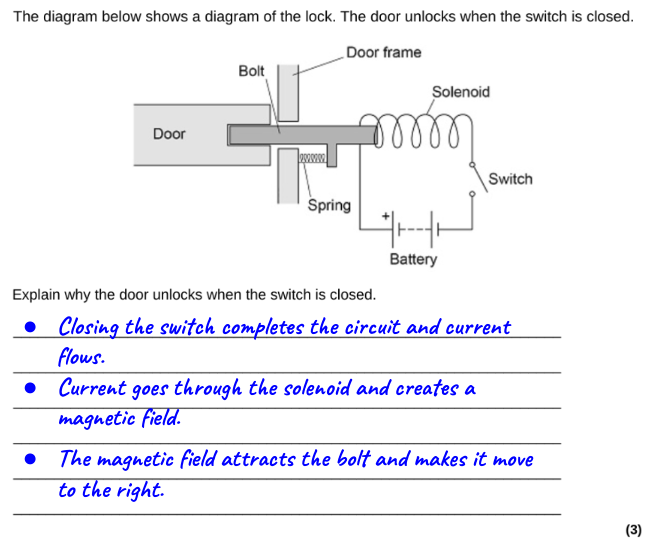

How to draw a Sankey diagram for a filament lamp

We can, however, draw a similar Sankey diagram for a filament lamp that is completely consistent with the IoP’s Energy Stores and Pathways model if we focus on the pathways by which energy is transferred, rather than on the forms of energy.

The second diagram, in my opinion, provides a much more secure foothold for understanding the emission spectrum of an incandescent filament lamp.

And, as the Science National Curriculum reminds us, we should seek to use ‘physical processes and mechanisms, rather than energy, to explain’ how systems behave. Energy is a useful concept for placing a limit on what can happen, but at the school level I think it is sometimes overused as an explanation of why things happen.

Closing thought

Stephen Hawking surmised that humanity had perhaps 100 years left on a habitable Earth. We are in a race to make a less destructive impact on our environment. ‘Reverse engineering’ Swift’s ‘two ears of corn where one grew before’ so that one joule of energy would do the same work as two joules did previously would be a huge step forward.

And for that goal, the humble Sankey diagram might prove to be an invaluable tool.